Engineering Playground

When will we see an AI system listed in the closing credits of your favorite feature-length film? Rick and Mortify is a tool we built using state-of-the-art large vision and language models to create never-before-seen episodes of Rick and Morty with minimal human intervention.

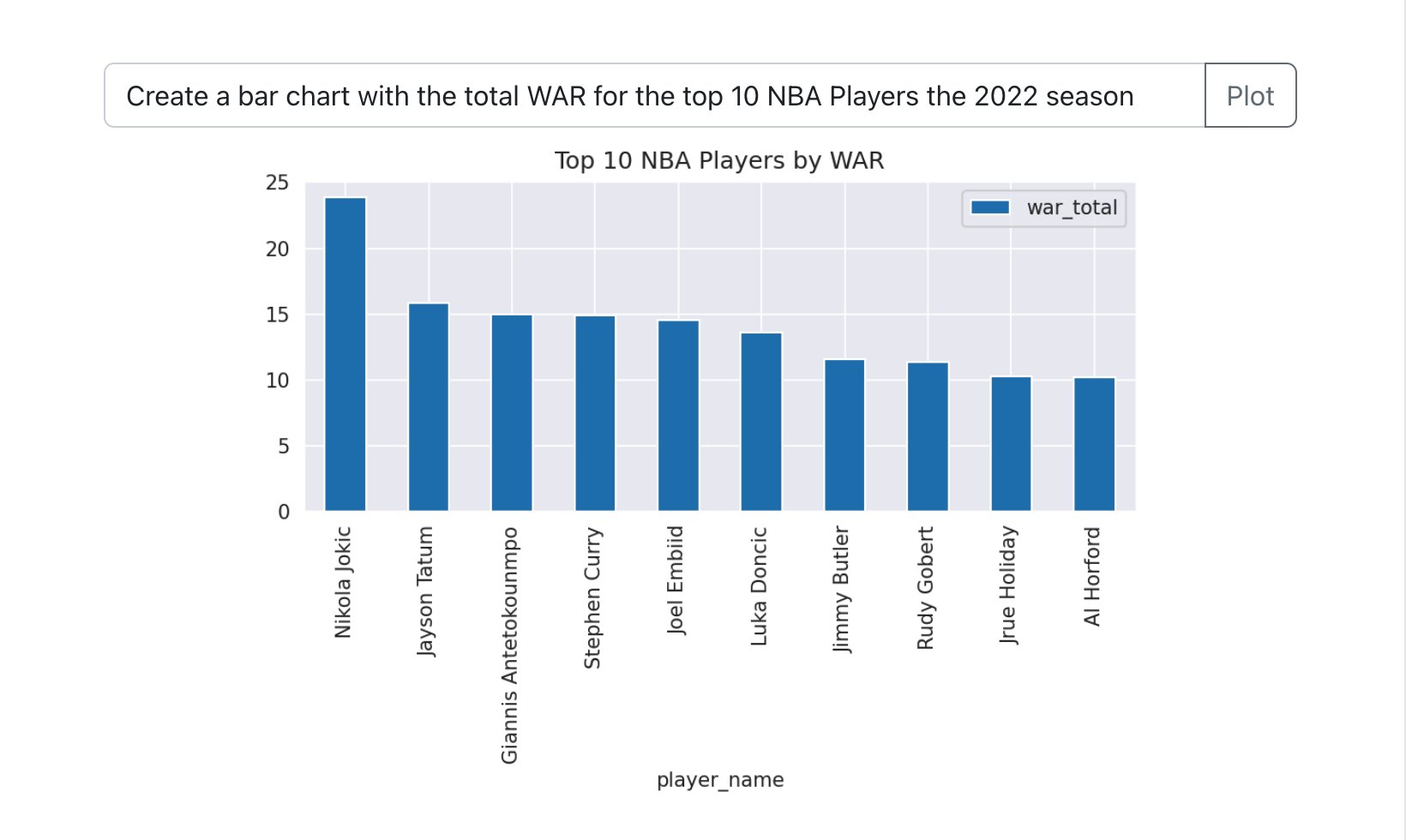

What if you could do any data analysis just by speaking in natural language? AutoPlot is a tool we developed to explore the limits of next-generation human interfaces for visually understanding data.

Confetti AI is an educational platform helping people learn the skills to succeed in artificial intelligence careers. It provides a collection of targeted resources and tools to empower the next generation of AI practitioners. We were acquired by Towards AI in 2022.

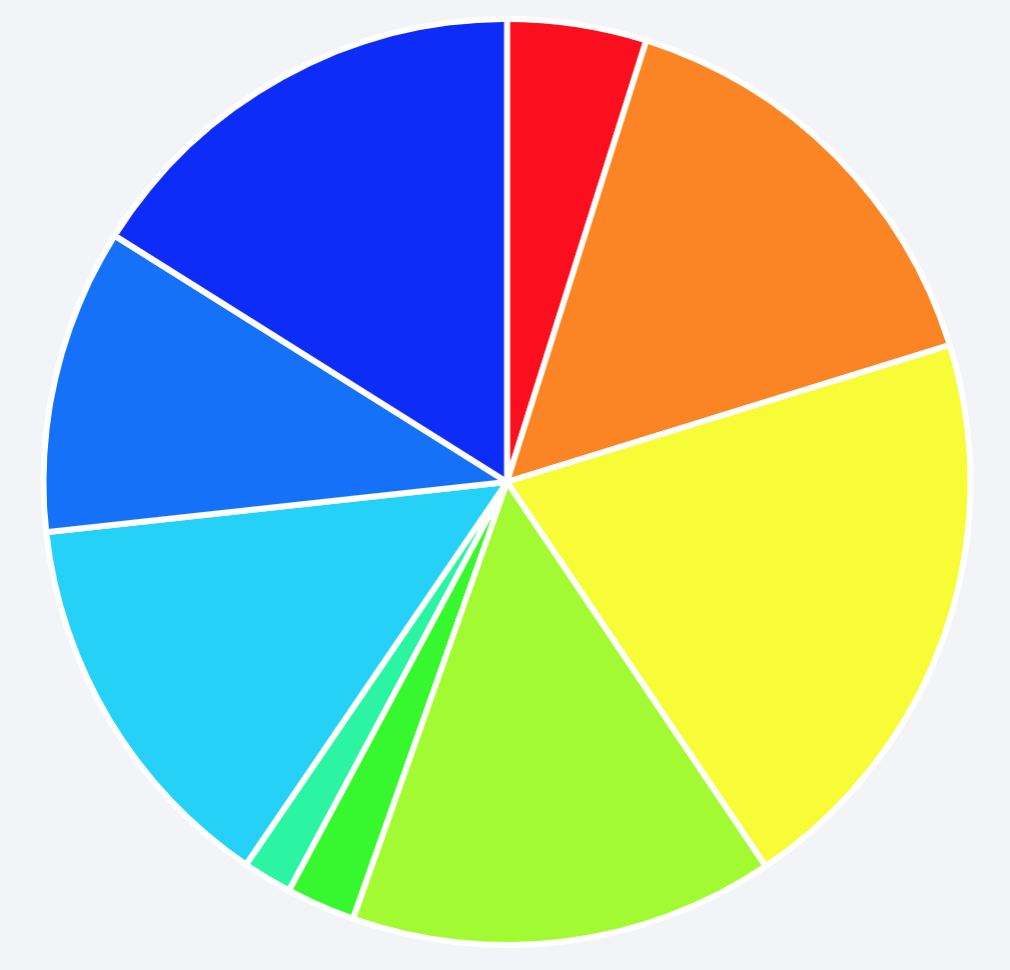

Every year, a share of your hard-earned money goes to taxes. This small project attempts to answer a simple question: what does your tax money actually pay for?

AI School is a mobile app designed to teach the basics of artificial intelligence, machine learning, and deep learning. It includes a comprehensive lesson plan for learning fundamental principles.

Publications

I provide an overview of key machine learning concepts that show up in data science and ML interviews. This primer includes core theory as well as practice problems to test your knowledge.

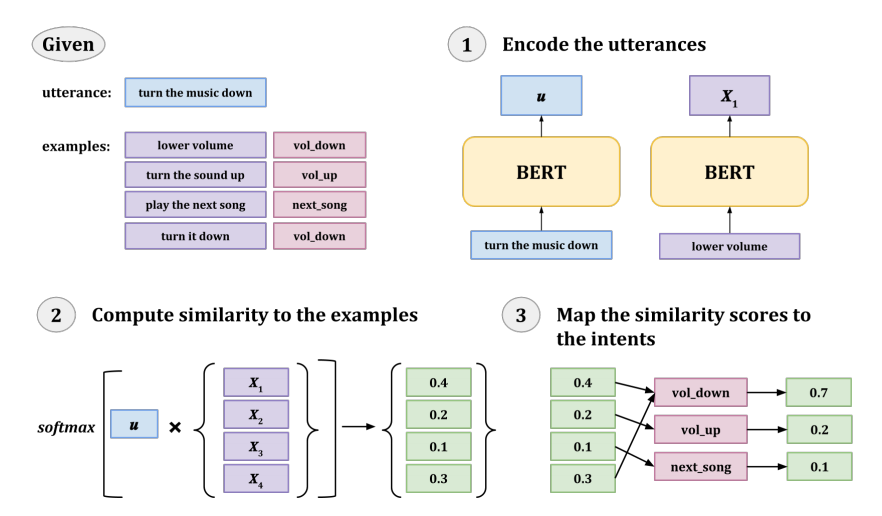

We propose two approaches for improving the generalizability of utterance classification models (example-driven training and observers) that, when used in combination, achieve state-of-the-art results in full-data and few-shot settings on several intent prediction datasets.

We introduce DialoGLUE (Dialogue Language Understanding Evaluation), a public benchmark consisting of 7 task-oriented dialogue datasets covering 4 distinct natural language understanding tasks. We introduce new models that achieve state-of-the-art results on 5/7 datasets in the benchmark.

The goal of this workshop is to bring together NLP researchers and practitioners in different fields, alongside experts in speech and machine learning, to discuss the current state of the art and new approaches, to share insights and challenges, to bridge the gap between academic research and real-world product deployment, and to shed light on future directions.

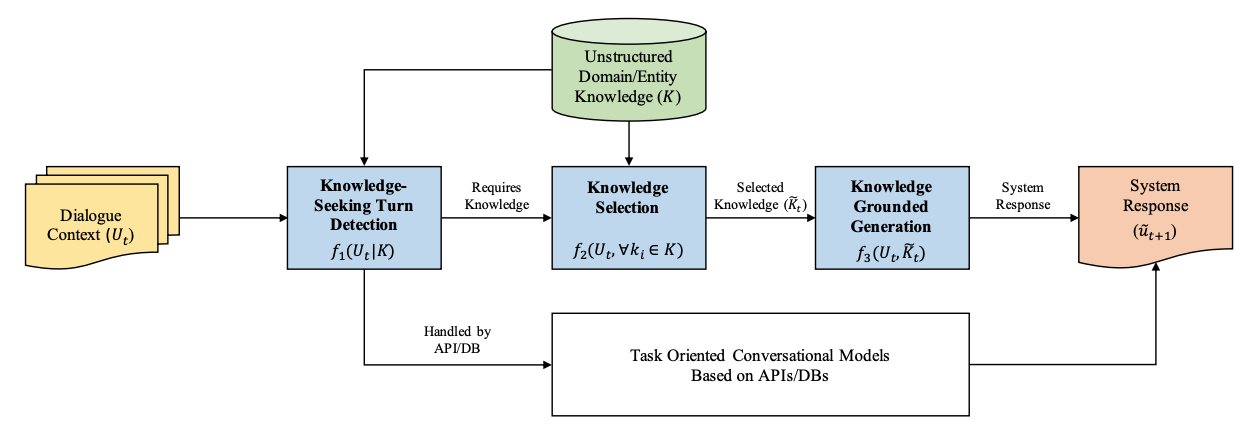

We propose to expand coverage of task-oriented dialogue systems by incorporating external unstructured knowledge sources.

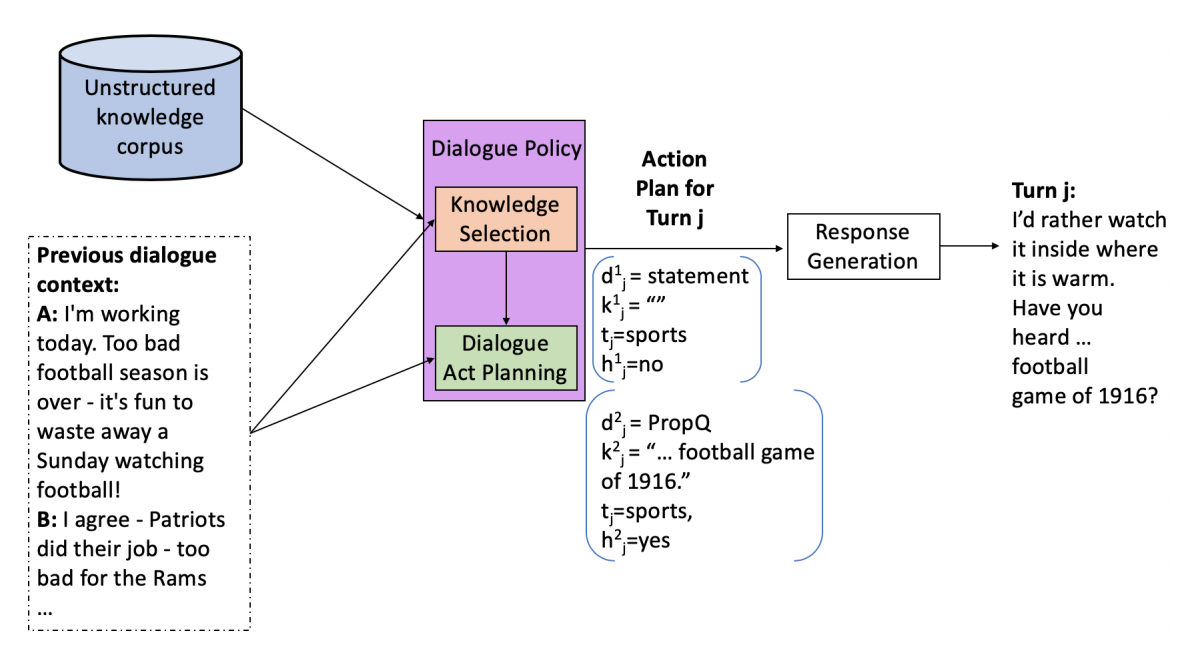

We propose a technique for using a dialogue policy to plan the content and style of target responses in the form of an action plan.

We propose a novel scheme for interactive human-in-the-loop learning, achieving more data-efficient performance on a vision and language task.

We release an updated version of the Cambridge MultiWOZ dataset with dialogue state annotation corrections and corresponding state tracking baselines.

We demonstrate the efficacy of a new neural dialogue agent that is able to effectively sustain grounded, multi-domain discourse through a novel key-value retrieval mechanism.

We show that models with domain-specific grounding can effectively realize the pragmatic reasoning that is necessary for more robust natural language interaction.

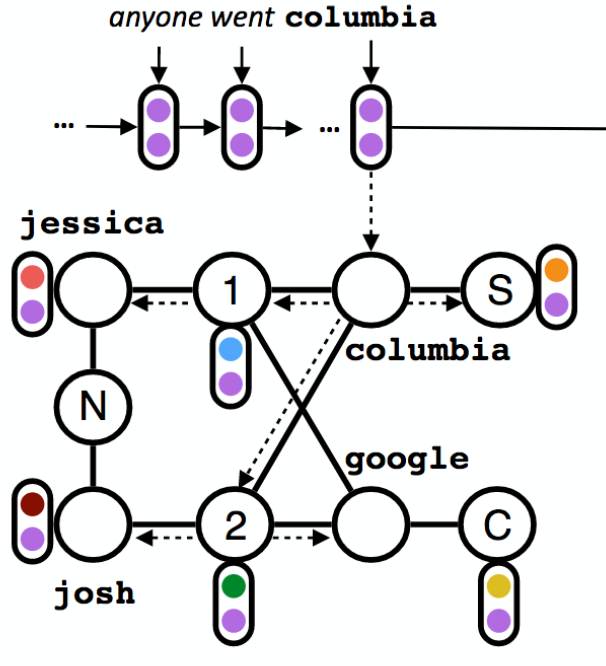

To model both structured knowledge and unstructured language in a novel dialogue setting, we propose a neural model with dynamic knowledge graph embeddings that evolve as the dialogue progresses.

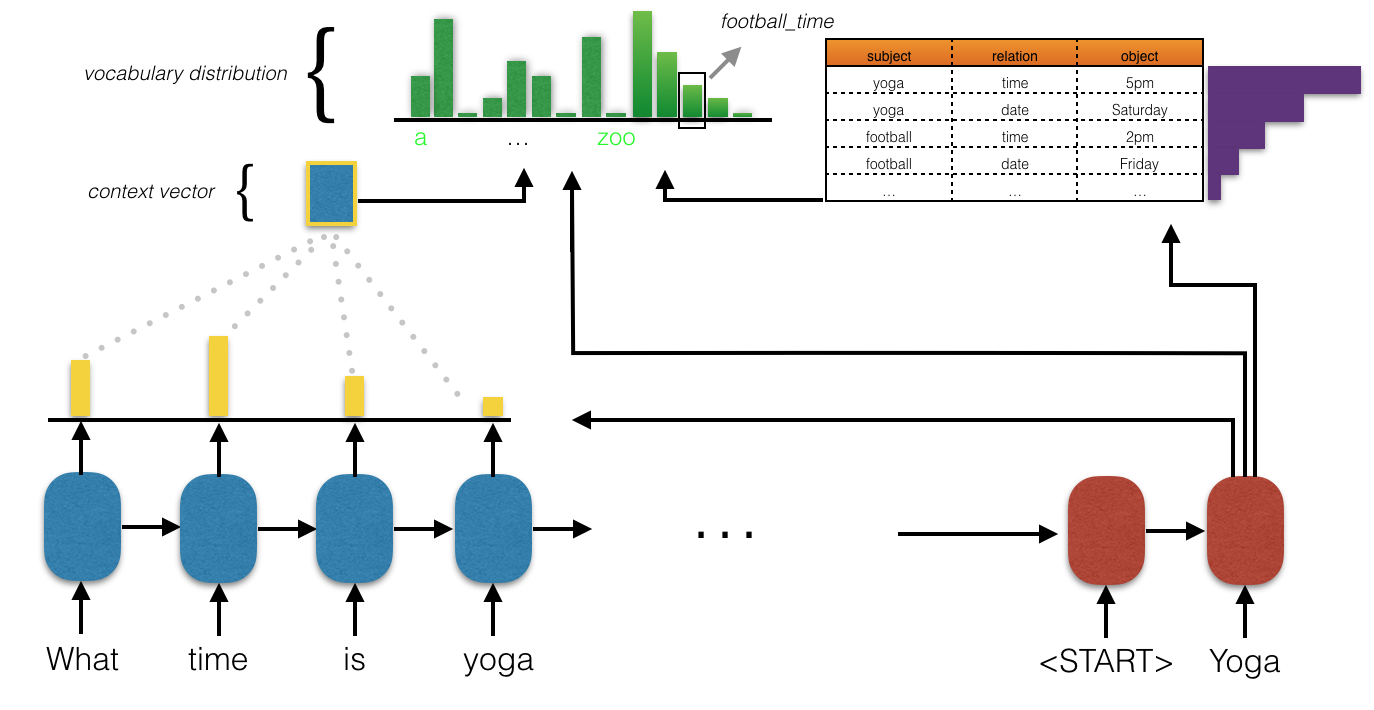

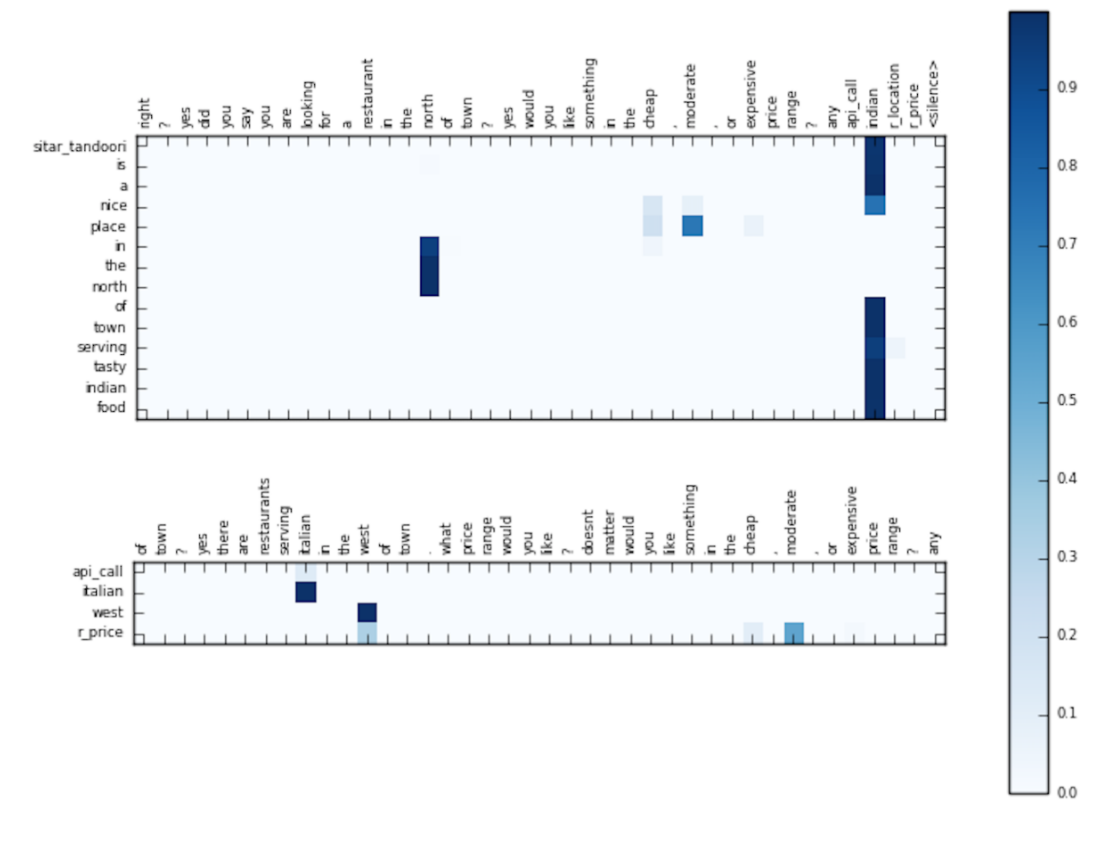

We show the effectiveness of simple sequence-to-sequence neural architectures with a copy mechanism, outperforming more sophisticated models on a standard task-oriented dialogue dataset.

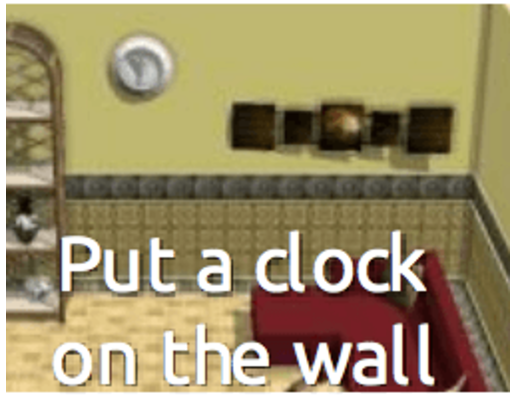

We present SceneSeer: an interactive text-to-3D scene generation system with a learned spatial knowledge base that allows a user to design 3D scenes using natural language.

Technical Reports

We investigate neural memory network architectures for the task of natural language inference and propose models for using attention across relevant semantic phrases to inform common sense reasoning.

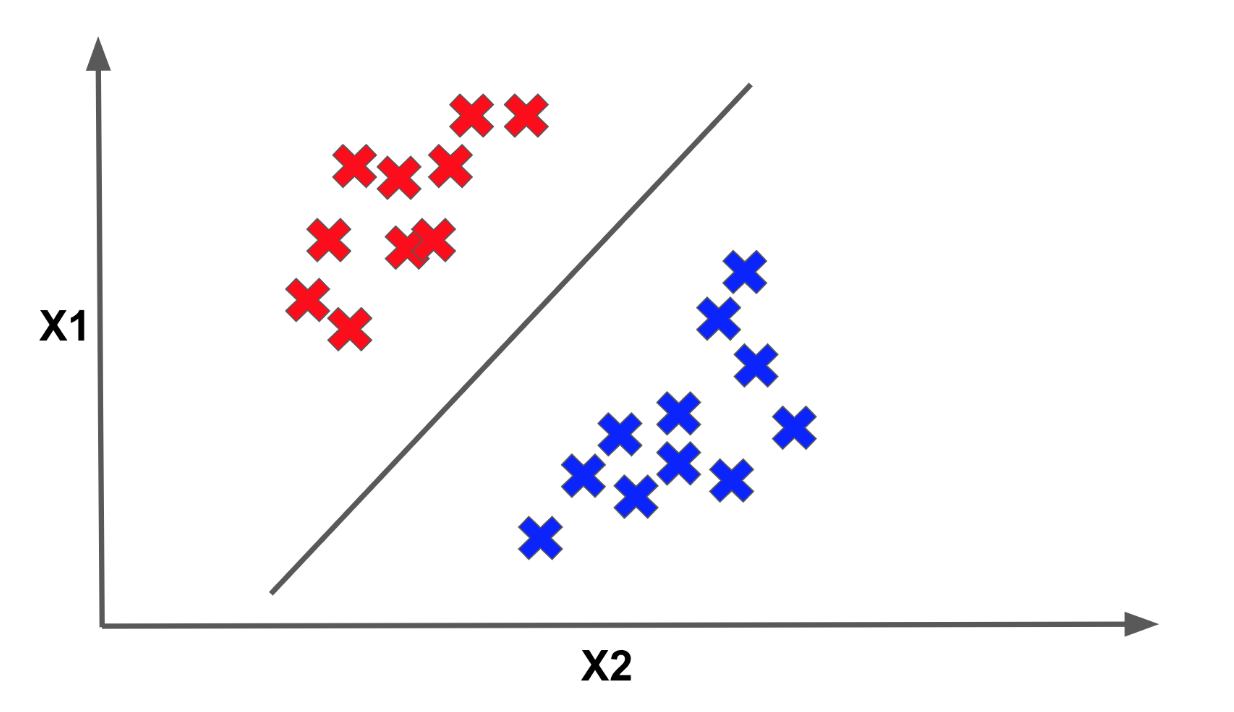

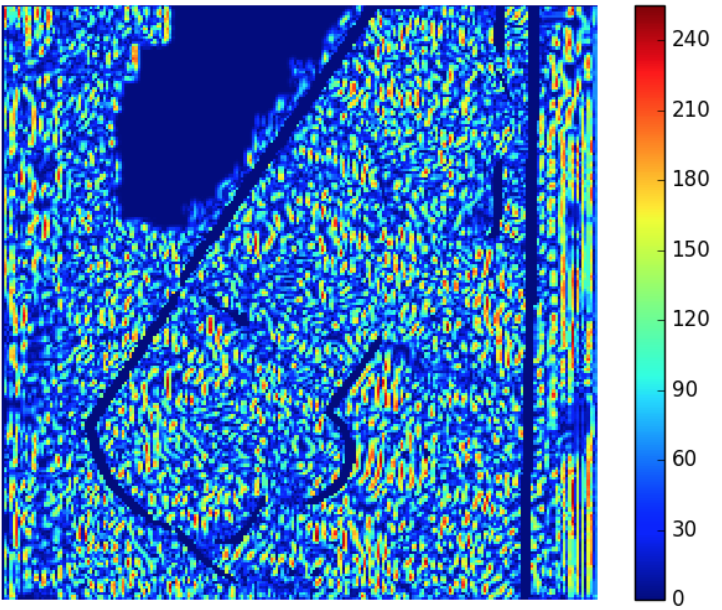

We show a process for visualizing and identifying changes in activations between adversarial images and their regular counterparts and propose a Bayesian framework for improving prediction accuracy on adversarial examples.

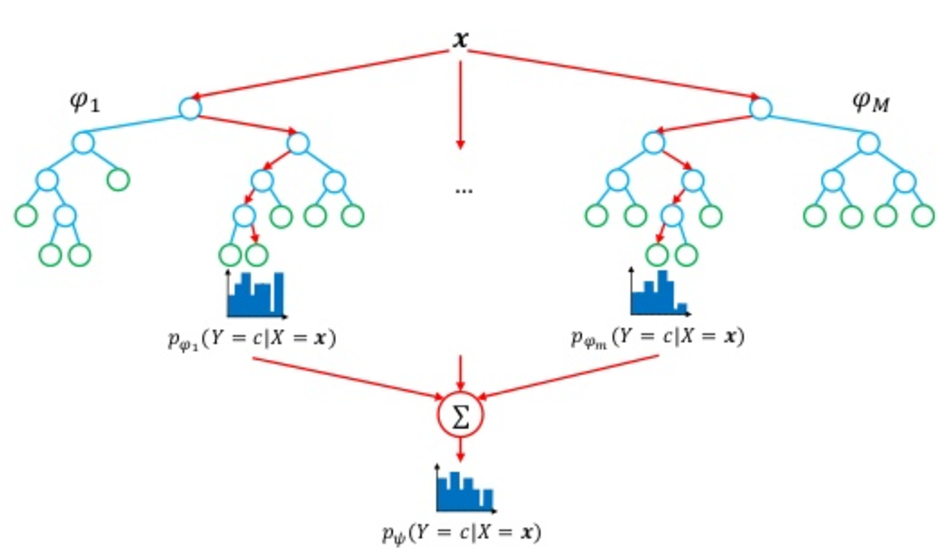

We implement a random forest classifier with a carefully engineered and selected collection of linguistic and semantic features for the task of natural language inference, achieving an F1 of 80.9% on the SemEval-2014 dataset.